AI wrote the US Constitution, says AI content detector

If you feed excerpts of the US Constitution into AI detection tools, they claim it was written by AI tools such as ChatGPT and Google Bard.

ZeroGPT seems 94% certain that AI wrote the Constitution, while originality.ai is 60% confident.

The Constitution was drafted in 1787, more than 200 years ago.

So, do you think it is possible for a document that old to have AI origins?

Well, that could only be the case if the writers were either robots themselves or used a time machine to reach the era of AI technology.

Jokes aside!

The claims are simply false.

But still, for the record, H. G. Wells popularised the idea of a time machine in his 1895 novel The Time Machine, long after the Constitution was written, and the development of robots is even more recent.

The question is, why do these tools seem so confident in labelling a centuries-old document as AI-generated?

Let’s find out!

Understanding the Reason Behind False Positives by AI Content Detectors

Just like AI content generators, AI detectors are trained on vast datasets drawn from various sources, the US Constitution being one of them. These datasets help them differentiate between AI and human text.

When AI detectors are given text to analyse, they usually check its structure, compare it with what they have learned, and judge whether they would have written it the same way in response to the same query. If so, the tool simply classifies the text as AI-written.

While analysing the structure of the text, AI detection tools usually rely on two measures, namely perplexity and burstiness. The lower the values of these two measures in the text, the higher the chances that the content is flagged as AI-generated.

Let’s first understand these two variables separately and how AI detectors sometimes misunderstand them, resulting in false positives.

Perplexity

Perplexity measures how unpredictable a text is.

Simply put, if the words in a sequence are hard to predict, the text has high perplexity.

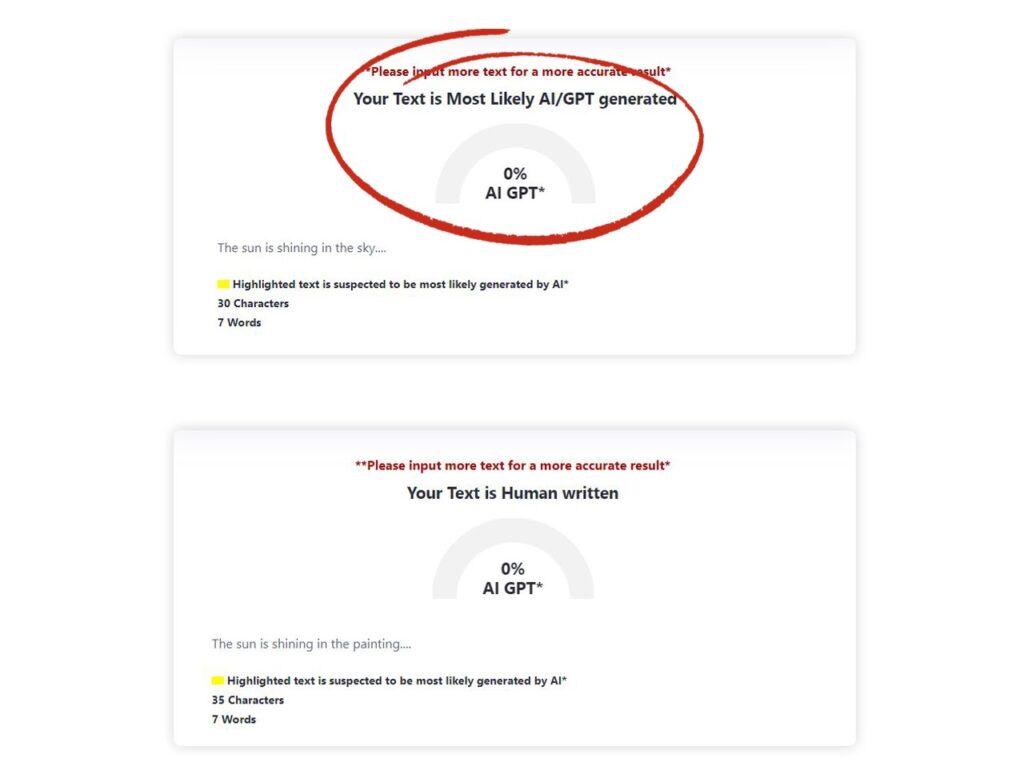

For example, suppose I say, “The sun is shining in the…”

You will probably predict “sky” as the next word, since there is nothing uncertain or perplexing about it.

However, if I end the sentence with the word “painting”, making it “The sun is shining in the painting.”, it may surprise you a little, as that is not a common pattern.

AI detectors work along the same lines. If there is randomness in the text, the tool considers it human-written.

But if the words in a sequence are easy to predict, the tool considers the text AI-written.
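To make the idea concrete, here is a minimal sketch of a perplexity calculation. It assumes a toy bigram model with add-one smoothing, trained on a tiny made-up corpus. Real detectors rely on large language models whose internals are not public, so the function name `bigram_perplexity` and the sample corpus are purely illustrative.

```python
import math
from collections import Counter


def bigram_perplexity(train_tokens, test_tokens):
    """Perplexity of test_tokens under an add-one-smoothed bigram model."""
    # The vocabulary covers both texts, so unseen words still get some probability.
    vocab_size = len(set(train_tokens) | set(test_tokens))
    context_counts = Counter(train_tokens[:-1])
    bigram_counts = Counter(zip(train_tokens, train_tokens[1:]))

    pairs = list(zip(test_tokens, test_tokens[1:]))
    log_prob = 0.0
    for prev, word in pairs:
        p = (bigram_counts[(prev, word)] + 1) / (context_counts[prev] + vocab_size)
        log_prob += math.log(p)
    # Perplexity is the exponential of the average negative log-probability.
    return math.exp(-log_prob / len(pairs))


# Hypothetical training text standing in for what the model has "read".
corpus = (
    "the sun is shining in the sky . "
    "the sun is shining in the sky . "
    "the bird is flying in the sky ."
).split()

expected = "the sun is shining in the sky".split()
surprising = "the sun is shining in the painting".split()

print(bigram_perplexity(corpus, expected))
print(bigram_perplexity(corpus, surprising))
```

Under this toy model, the sentence ending in “sky” scores a lower perplexity than the one ending in “painting”, which is the same intuition a detector applies at a much larger scale.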

Image via test conducted on ZeroGPT.

Now, you might object that humans do not use only random words; they use predictable ones too.

Well, that is where AI detectors go wrong.

It is true that humans usually write with some randomness, but not always.

Sometimes we also use formal, conventional, predictable and straightforward language that shows low perplexity, resulting in false positives from AI detectors.

Burstiness

Burstiness is similar to perplexity, but at the sentence level.

Perplexity looks at how predictable individual words are, while burstiness measures how much sentences vary in length and structure across a passage.

Human text is highly bursty, as we tend to write with variation. For instance, one sentence can be short while the next is lengthy, or vice versa. Similarly, we can follow a complex sentence with a simple one to keep the writing creative and engaging.

However, AI models usually maintain uniformity and consistency throughout the text. Their sentences tend to have a regular length and show little variation. This makes the text monotonous and is a sign of low burstiness.
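As a rough illustration, burstiness can be approximated by how much sentence lengths vary. The sketch below uses the coefficient of variation of sentence lengths (standard deviation divided by mean) as a stand-in. This is an assumed proxy, not the formula any particular detector is known to use, and the `burstiness` function and sample texts are hypothetical.

```python
import re
import statistics


def burstiness(text):
    """Coefficient of variation of sentence lengths, measured in words."""
    # Naive sentence split on ., ! and ? (good enough for a sketch).
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


varied = (
    "I ran. The storm that had been gathering over the hills all afternoon "
    "finally broke just as I reached the gate. Then silence."
)
uniform = "The cat sat on the mat. The dog lay on the rug. The bird sat on the branch."

print(burstiness(varied))
print(burstiness(uniform))
```

The passage with mixed short and long sentences scores higher than the one with three sentences of equal length, which scores zero because its sentence lengths do not vary at all.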

However, as with perplexity, there are exceptions here too.

Humans can also write in a highly structured and consistent style, especially in a formal document such as the US Constitution.

In Conclusion, the Infallibility of AI Content Detectors is a Myth!

AI detectors labelling the US Constitution as AI-generated raises questions about their accuracy. In fact, this is not the only instance in which AI content detection tools have produced false positives. Earlier, they were also found to label the Book of Genesis as AI-written.

Several experts have questioned the reliability of AI detectors and expressed concerns about their vulnerabilities.

AI researcher Simon Willison tweeted his concerns about AI detector products, stating, “I think they’re mostly snake oil. Everyone desperately wants them to work, people in education especially, and it’s easy to sell a product that everyone wants, especially when it’s really hard to prove if it’s effective or not.”

Similarly, Ethan Mollick, a professor at Wharton School, said that “There is no tool that can reliably detect ChatGPT-4/ Bing/ Bard writing. The existing tools are trained on GPT-3.5, they have high false positive rates (10%+), and they are incredibly easy to defeat. Also, you cannot ask the AI to identify AI writing either. We need to adapt.”

Research conducted by academics at the University of Maryland revealed the vulnerabilities of existing AI text detectors, stating that against a sufficiently advanced language model, even the best-possible detector can perform only marginally better than a random classifier.

For watermark-based AI detectors, the study revealed that “an attacker can use a paraphraser to remove the LLM signatures from an AI-generated text to avoid detection” and can even spoof the watermark detector so that genuine human text is flagged as AI-generated.

Ultimately, no perfect formula exists that we can rely on to differentiate between human-written and machine-generated text.

While AI writing detectors can make predictions, their margin of error remains too large for them to deliver precise results.